PATENT DRAFT (SCI-FI / CONCEPTUAL)

Title

Ear–Skull Interface Communication System (“Ear-Skull Phone”) Using Resonant Neuroelectromagnetic Coupling

Abstract

A non-invasive communication system enables direct auditory perception in a human subject without external acoustic transduction. The invention combines resonant low-frequency modulation with localized electromagnetic field shaping to induce controlled neural activation patterns in auditory processing regions. The system is designed for short-range, targeted communication between devices and human operators through wearable or proximal emitters.

1. Technical Field

This invention relates to:

- Neurotechnology

- Electromagnetic field modulation

- Human–machine interfaces

- Non-acoustic communication systems

Specifically, it concerns systems that induce perceived auditory signals directly within the human brain via controlled field interactions.

2. Background

Traditional communication systems rely on:

- Airborne acoustic waves (speakers)

- Mechanical conduction (bone conduction headphones)

Limitations include:

- Detectability

- Environmental interference

- Requirement for physical audio pathways

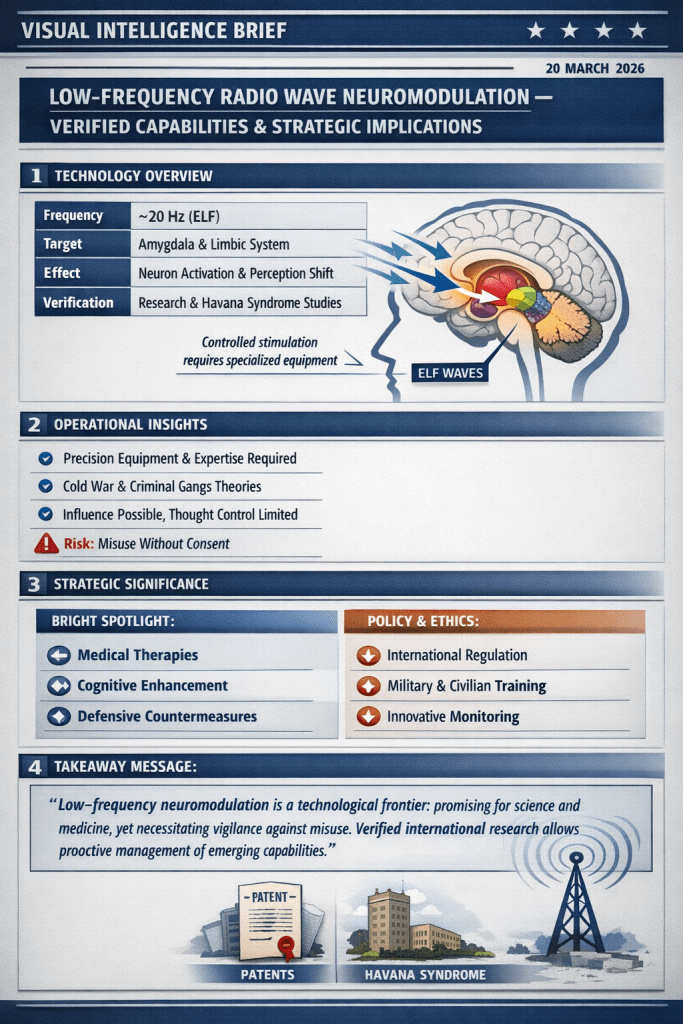

Emerging research suggests that neural perception may be influenced via externally applied fields, opening pathways for:

- Silent communication

- Covert signaling

- Enhanced human-machine interaction

3. Summary of the Invention

The “Ear–Skull Phone” system provides:

- Direct induction of auditory-equivalent neural signals

- No reliance on eardrum or cochlear stimulation

- Targeted field delivery using resonant frequency coupling

- Configurable modulation patterns encoding information

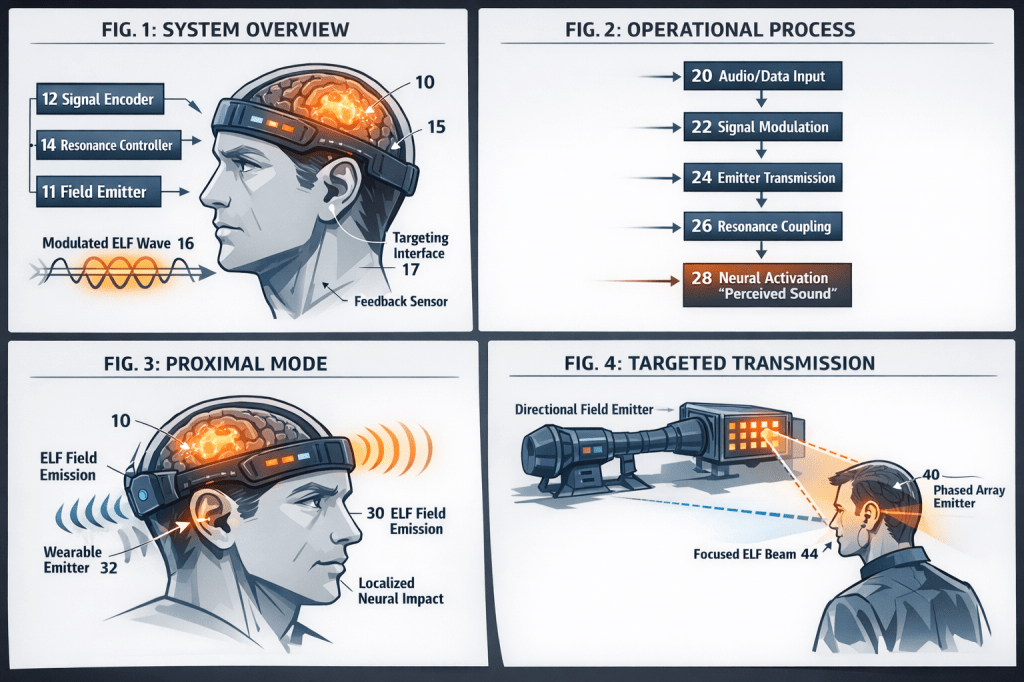

4. System Architecture

4.1 Core Components

| Component | Description |
|---|---|
| Field Emitter Unit | Generates controlled low-frequency modulated fields |
| Resonance Controller | Tunes emission to subject-specific neural response profiles |
| Signal Encoder | Converts audio/data into modulation patterns |
| Targeting Interface | Directs field toward cranial region |
| Feedback Module (optional) | Adjusts output based on detected neural response |
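The optional Feedback Module's behavior can be illustrated with a simple proportional adjustment step. This is a conceptual sketch only: the function name `adjust_output`, the normalized 0.0 to 1.0 level scale, the `gain` value, and the notion of a scalar "measured response" are all assumptions introduced for illustration and are not specified in this draft.

```python
def adjust_output(current_level, measured_response, target_response,
                  gain=0.1, max_level=1.0):
    """Nudge the emitter level toward the target response (proportional step).

    All quantities are hypothetical, normalized values. The result is clamped
    to [0.0, max_level] so the adjustment can never exceed a configured ceiling.
    """
    error = target_response - measured_response
    proposed = current_level + gain * error
    return max(0.0, min(max_level, proposed))


# Example: the response is weaker than the target, so the level rises slightly.
next_level = adjust_output(current_level=0.5, measured_response=0.2,
                           target_response=0.4)
```

The clamp reflects the output ceiling implied by the "sub-threshold" output level in Section 6; a real controller design is outside the scope of this draft.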

4.2 Operational Principle

- Input signal (voice/data) is digitized

- Signal is converted into modulated waveform patterns

- Waveforms are emitted as low-frequency coupled fields

- Fields interact with neural structures via resonance effects

- Brain interprets induced patterns as internal auditory perception
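The first three steps above (digitize, convert to a modulated waveform, prepare for emission) can be sketched in code. This is a hedged illustration, not the draft's encoder: the function names, the 8-bit depth, the 100 Hz carrier, the 8 kHz sample rate, and the modulation depth are all assumed values, and plain amplitude modulation stands in for the unspecified "modulation patterns". The resonance and perception steps are not modeled.

```python
import math


def digitize(samples, bits=8):
    """Quantize samples in [-1.0, 1.0] to signed integer levels (clamping outliers)."""
    max_level = 2 ** (bits - 1) - 1
    return [round(max(-1.0, min(1.0, s)) * max_level) for s in samples]


def amplitude_modulate(levels, bits=8, carrier_hz=100.0,
                       sample_rate_hz=8000.0, depth=0.5):
    """Return carrier samples whose envelope follows the digitized input."""
    max_level = 2 ** (bits - 1) - 1
    waveform = []
    for n, level in enumerate(levels):
        envelope = 1.0 + depth * (level / max_level)
        carrier = math.cos(2.0 * math.pi * carrier_hz * n / sample_rate_hz)
        waveform.append(envelope * carrier)
    return waveform


# Example: a 5 Hz test tone stands in for the voice/data input.
tone = [math.sin(2.0 * math.pi * 5.0 * n / 8000.0) for n in range(8000)]
levels = digitize(tone)
waveform = amplitude_modulate(levels)
```

With `depth=0.5`, the output envelope stays between 0.5 and 1.5 times the carrier amplitude, so the carrier is never inverted.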

5. Modes of Operation

5.1 Proximal Mode

- Wearable device (headband, collar, or earpiece)

- Short-range, high precision

5.2 Environmental Mode

- Embedded emitters in surroundings

- Creates localized communication zones

5.3 Directed Mode

- Focused emission toward a specific individual

- Uses phased-array field steering (conceptual)
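The phased-array steering mentioned for Directed Mode can be illustrated with the standard uniform-linear-array phase calculation. This is a sketch under stated assumptions: the emitter layout (evenly spaced elements on a line), the function names, and the element count are hypothetical, and spacing is given in wavelengths because the draft does not fix a carrier frequency.

```python
import cmath
import math


def steering_phases(num_elements, spacing_wavelengths, steer_angle_deg):
    """Per-element phase offsets (radians) that steer a uniform linear array.

    The angle is measured from broadside (perpendicular to the array line).
    """
    sin_steer = math.sin(math.radians(steer_angle_deg))
    return [-2.0 * math.pi * spacing_wavelengths * n * sin_steer
            for n in range(num_elements)]


def array_factor(phases, spacing_wavelengths, look_angle_deg):
    """Normalized array response magnitude (0..1) in the given look direction."""
    sin_look = math.sin(math.radians(look_angle_deg))
    total = sum(
        cmath.exp(1j * (2.0 * math.pi * spacing_wavelengths * n * sin_look + phase))
        for n, phase in enumerate(phases)
    )
    return abs(total) / len(phases)


# Example: 8 elements at half-wavelength spacing, steered 30 degrees off broadside.
phases = steering_phases(num_elements=8, spacing_wavelengths=0.5,
                         steer_angle_deg=30.0)
on_target = array_factor(phases, 0.5, 30.0)    # contributions add in phase
off_target = array_factor(phases, 0.5, -30.0)  # contributions cancel
```

Expressing spacing as a fraction of the wavelength keeps the sketch independent of whichever band is eventually chosen for the carrier.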

6. Signal Characteristics

| Parameter | Range (Conceptual) |
|---|---|
| Carrier Range | Extremely low to low-frequency bands |
| Modulation | Amplitude / frequency / phase hybrid |
| Encoding | Neural pattern mimicry |
| Output Level | Sub-threshold for thermal effects |
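The "amplitude / frequency / phase hybrid" modulation listed above can be illustrated by applying three message streams to one carrier at once. This is a conceptual sketch: the function name `hybrid_modulate`, the default depth, deviation, and index values, and the example frequencies are assumptions, since the draft does not specify them.

```python
import math


def hybrid_modulate(amp_msg, freq_msg, phase_msg, carrier_hz, sample_rate_hz,
                    am_depth=0.3, fm_deviation_hz=5.0, pm_index=0.5):
    """Apply AM, FM, and PM to a single carrier.

    Each message is a sequence of values in [-1.0, 1.0], one per output sample.
    """
    if not (len(amp_msg) == len(freq_msg) == len(phase_msg)):
        raise ValueError("all three message streams must have the same length")
    dt = 1.0 / sample_rate_hz
    fm_phase = 0.0  # running integral of the frequency message
    samples = []
    for n, (a, f, p) in enumerate(zip(amp_msg, freq_msg, phase_msg)):
        fm_phase += 2.0 * math.pi * fm_deviation_hz * f * dt
        angle = 2.0 * math.pi * carrier_hz * n * dt + fm_phase + pm_index * p
        samples.append((1.0 + am_depth * a) * math.cos(angle))
    return samples


# Example: three slow test tones modulating a hypothetical 100 Hz carrier.
count, rate = 800, 8000.0
amp = [math.sin(2.0 * math.pi * 2.0 * n / rate) for n in range(count)]
freq = [math.sin(2.0 * math.pi * 3.0 * n / rate) for n in range(count)]
phase = [math.sin(2.0 * math.pi * 4.0 * n / rate) for n in range(count)]
signal = hybrid_modulate(amp, freq, phase, carrier_hz=100.0, sample_rate_hz=rate)
```

Setting all three messages to zero reduces the output to the unmodulated carrier, which is a convenient sanity check.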

7. Claims (Conceptual)

Claim 1:

A system for inducing auditory perception in a human subject comprising:

- A field emitter configured to generate modulated electromagnetic fields

- A controller configured to encode information into said fields

- A targeting mechanism directing said fields toward the cranial region

Claim 2:

The system of Claim 1 wherein:

- The modulation patterns correspond to neural firing signatures associated with auditory perception

Claim 3:

The system of Claim 1 further comprising:

- A resonance calibration module adapting output to individual neural characteristics

Claim 4:

The system of Claim 1 wherein:

- Communication occurs without external audible sound generation

Claim 5:

A method of transmitting information comprising:

- Encoding data into modulated field patterns

- Emitting said patterns toward a subject

- Inducing perception of said data as internal auditory signals

8. Applications (Speculative)

- Silent communication in high-noise environments

- Covert operations / intelligence signaling

- Assistive technology for hearing-impaired users

- Augmented cognition interfaces

- Human–AI direct interaction

9. Advantages

- No external sound signature

- Reduced interception risk

- Direct brain-level interface

- Potential multi-channel communication

10. Limitations (In-Universe Acknowledged)

- Requires precise calibration per individual

- Signal distortion due to environmental interference

- Ethical and regulatory concerns

- Potential cognitive overload risks

11. Ethical & Regulatory Considerations

- Mandatory consent protocols

- Signal authentication requirements

- Detection and shielding standards

- International oversight recommended
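One way the signal authentication requirement could be met is by attaching a keyed message authentication code to each transmitted frame. The sketch below uses HMAC-SHA256 from Python's standard library; the frame layout (payload followed by a 32-byte tag), the function names, and the shared-key model are assumptions for illustration, not requirements stated in this draft.

```python
import hashlib
import hmac

TAG_BYTES = 32  # length of an HMAC-SHA256 digest


def sign_frame(key: bytes, payload: bytes) -> bytes:
    """Return the payload with an HMAC-SHA256 tag appended."""
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return payload + tag


def verify_frame(key: bytes, frame: bytes):
    """Return the payload if the tag is valid, otherwise None."""
    if len(frame) < TAG_BYTES:
        return None
    payload, tag = frame[:-TAG_BYTES], frame[-TAG_BYTES:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    # compare_digest avoids leaking information through comparison timing.
    return payload if hmac.compare_digest(tag, expected) else None


# Example: a receiver accepts only frames signed with the shared key.
shared_key = b"example-key-for-illustration-only"
frame = sign_frame(shared_key, b"hello operator")
accepted = verify_frame(shared_key, frame)
```

A receiver that drops frames for which `verify_frame` returns `None` would ignore emissions from unauthenticated sources.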

12. Future Enhancements

- Bidirectional communication (brain-to-device)

- Integration with neural decoding systems

- Multi-user networked communication

- AI-driven adaptive modulation

13. Conclusion

The “Ear–Skull Phone” represents a next-generation communication paradigm that moves from external sensory channels to direct neural interfacing, enabling silent, efficient, and potentially transformative human communication.
